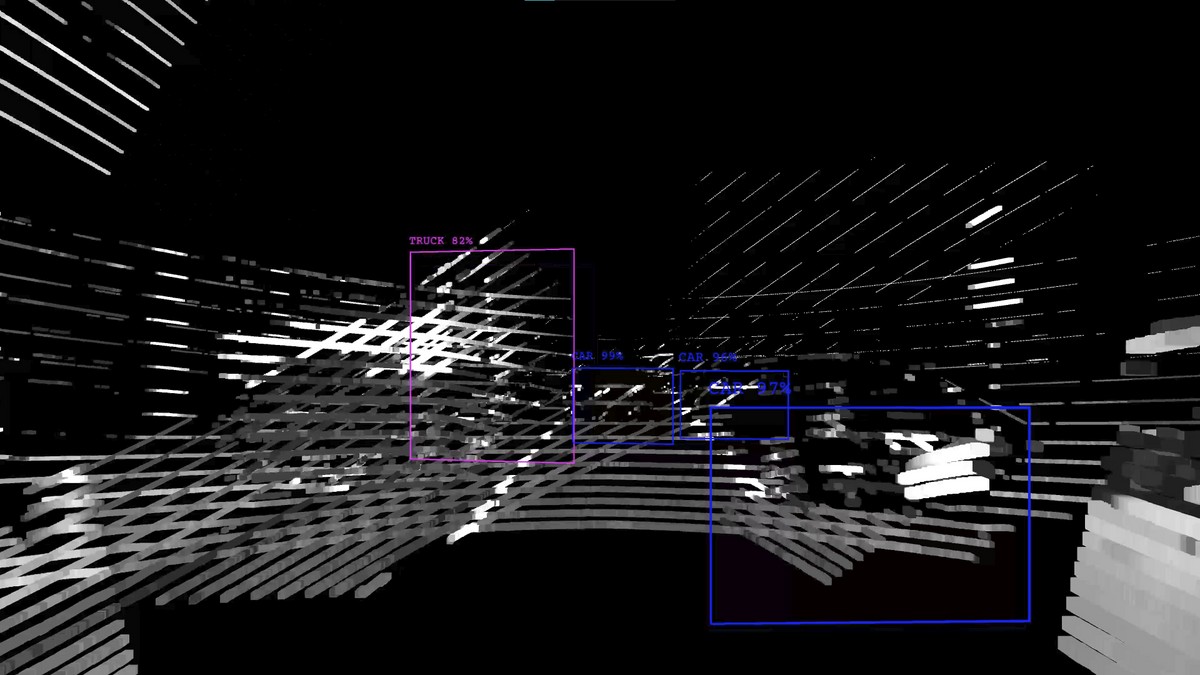

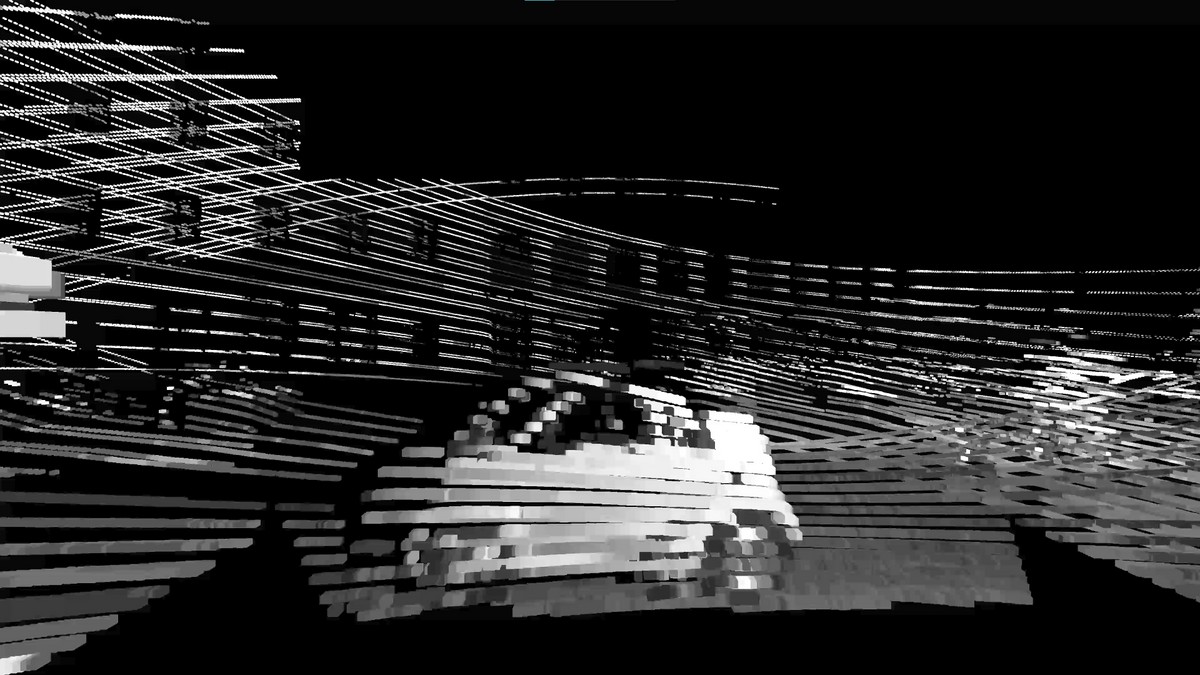

AUTO-NOM. Ich sehe was, das du nicht siehst. VR visualization of urban autonomous driving data

Leon Döring, 2022

Utilizing open-source research data for autonomous vehicles, AUTO-NOM investigates how a self-driving car perceives the urban landscape, and how we humans can reshape our perception of the city by looking at it through the lens of an autonomous vehicle.

In this Virtual Reality experience, the viewer takes a 15-minute ride through central Munich from the point of view of an autonomous research vehicle. What they see, however, is a visualization of the point-cloud data the car's 3D sensors have recorded. This data, amounting to hundreds of gigabytes, is represented by hundreds of thousands of small monochromatic cubes, which results in an abstract, three-dimensional, 360-degree image of the car's surroundings. In addition, AI object recognition annotations have been layered on top of this 3D animation. This was done using AI image processing software on the car's 2D camera footage, replicating the way an autonomous vehicle algorithmically processes its environment. Further non-visual sensor information, such as acceleration data, has been transformed into an underlying soundscape using sonification software.

Viewing an urban environment the way an autonomous vehicle would gives us the opportunity to look at the city differently and re-evaluate it. For example, one might interpret the way humans morph into indistinguishable silhouettes, while the shapes of cars and other vehicles stay relatively detailed and recognizable, as mirroring the hierarchy of a typical car-centric city. Although robotic cars may be safer than human drivers in the future, their need for error-free assessment of a situation might require cleaner, more segregated traffic infrastructure. This could conflict with the nature of many European city centers, possibly undoing recent progress in making urban infrastructure less car-centric.

The original format of this project is a real-time VR experience using the Unity game engine running on a high-end PC. To make it accessible without the need for powerful hardware, it has been submitted as a high-resolution 360-degree 3D video and as such is viewable on a number of devices, including standalone VR headsets and mobile devices.

This project originated as a student submission for a class at the Institute of Media and Design (IMD) of Technische Universität Braunschweig and was exhibited at Architekturgalerie München in June 2022. It uses data from the Audi Autonomous Driving Dataset (A2D2), licensed under Creative Commons; see https://a2d2.audi. The authors are not affiliated with Audi AG in any way.
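The idea of representing a point cloud as small cubes can be illustrated with a minimal Python sketch. It is not the project's actual pipeline, which runs in Unity and is not documented here. The function names `voxelize` and `cube_centers`, the cube size, and the sample points are all hypothetical, chosen only to show how many nearby sensor points might collapse into a single cube.

```python
import math
from typing import Iterable

Point = tuple[float, float, float]
Voxel = tuple[int, int, int]


def voxelize(points: Iterable[Point], cube_size: float) -> set[Voxel]:
    """Return the integer grid index of every cube that contains a point.

    Hypothetical helper: points falling into the same cube are merged,
    so the result has at most one entry per occupied cube.
    """
    if cube_size <= 0:
        raise ValueError("cube_size must be positive")
    return {
        (
            math.floor(x / cube_size),
            math.floor(y / cube_size),
            math.floor(z / cube_size),
        )
        for x, y, z in points
    }


def cube_centers(voxels: Iterable[Voxel], cube_size: float) -> list[Point]:
    """Convert grid indices back to cube center positions, sorted for stable output."""
    return sorted(
        ((i + 0.5) * cube_size, (j + 0.5) * cube_size, (k + 0.5) * cube_size)
        for i, j, k in voxels
    )


# Made-up sample points (in meters); the first two lie in the same cube.
sample_points = [(0.1, 0.2, 0.05), (0.2, 0.1, 0.15), (1.3, -0.3, 0.6)]
occupied = voxelize(sample_points, cube_size=0.25)
centers = cube_centers(occupied, cube_size=0.25)
```

In a sketch like this, a renderer would then draw one monochromatic cube at each position in `centers`. A larger `cube_size` yields fewer, coarser cubes, which is one plausible way fine detail such as a pedestrian's outline could blur into a silhouette.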

Project Partner

Institute of Media and Design TU Braunschweig, imd.tu-bs.de